Vectorless RAG Explained: The Next Evolution Beyond Embeddings

Discover how Vectorless Retrieval-Augmented Generation (RAG) works, how it differs from traditional vector-based RAG, and why it’s emerging as a powerful alternative for faster, more accurate AI systems.

The Retrieval Crisis in the Generative Era

Retrieval-Augmented Generation (RAG) has rapidly become the dominant architecture for grounding large language models (LLMs) in external data. By filling a model's context window with relevant retrieved information, businesses have substantially reduced the hallucinations that plagued early AI deployments. However, as we move into 2026, the industry is hitting a "Vector Wall."

Traditionally, RAG has relied almost exclusively on vector embeddings: numerical representations of text that enable semantic similarity search. While revolutionary, this approach is fundamentally approximate. It struggles with exact keywords, specialized terminology, and complex logical relationships. This is where Vectorless RAG enters the conversation, not as a replacement, but as a critical evolution for precision-first industries.

Key Takeaways

- Precision over Similarity: Vectorless RAG retrieves with exact matching, structured queries, and explicit rules instead of approximate embedding similarity.

- Infrastructure Efficiency: Eliminating vector databases reduces costs and deployment complexity.

- Explainable AI: Every retrieval step is auditable, providing a clear map of why a model reached a specific conclusion.

- Hybrid is the Future: The most resilient systems combine semantic vectors with symbolic retrieval.

Understanding the Vector Bottleneck

To appreciate the shift toward vectorless architectures, one must first understand the limitations inherent in the traditional vector-based flow. In a standard setup, documents are chunked, embedded into high-dimensional vectors, and stored in a specialized database. When a user queries the system, that query is also embedded, and the database returns the "nearest neighbors."
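To make the nearest-neighbor step concrete, here is a minimal Python sketch of brute-force cosine-similarity search. The three-dimensional vectors and chunk IDs are invented for illustration; a real system would get vectors with hundreds of dimensions from an embedding model and would usually query an approximate index rather than scanning every chunk.

```python
import math

# Hypothetical, hand-made 3-dimensional "embeddings". A real system would get
# high-dimensional vectors from an embedding model; these values are invented.
CHUNK_EMBEDDINGS = {
    "sustainability-policy": [0.9, 0.1, 0.0],
    "general-financial-health": [0.1, 0.8, 0.3],
    "q3-2024-ebitda-table": [0.2, 0.7, 0.6],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest_neighbors(query_vec: list[float], k: int = 2) -> list[tuple[str, float]]:
    """Brute-force k-nearest-neighbor search over every stored chunk."""
    scored = [
        (chunk_id, cosine_similarity(query_vec, vec))
        for chunk_id, vec in CHUNK_EMBEDDINGS.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Pretend the query "Q3 2024 EBITDA adjustments" embedded to this vector.
query_vector = [0.15, 0.85, 0.2]
for chunk_id, score in nearest_neighbors(query_vector):
    print(f"{chunk_id}: {score:.3f}")
```

With these made-up numbers, the general financial health chunk outranks the Q3 2024 EBITDA table, which mirrors the accountant example that follows.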

While this works well for broad, thematic queries ("Tell me about your sustainability policy"), it often falls short on specific, data-heavy requests. If an accountant asks the system for "Q3 2024 EBITDA adjustments," a vector search might return a document about "General Financial Health" because the two are semantically similar, while missing the exact table needed for the calculation.

If your AI system frequently returns 'close but wrong' answers for specific technical queries, you are likely suffering from vector similarity drift. A symbolic retrieval layer is often the missing ingredient.

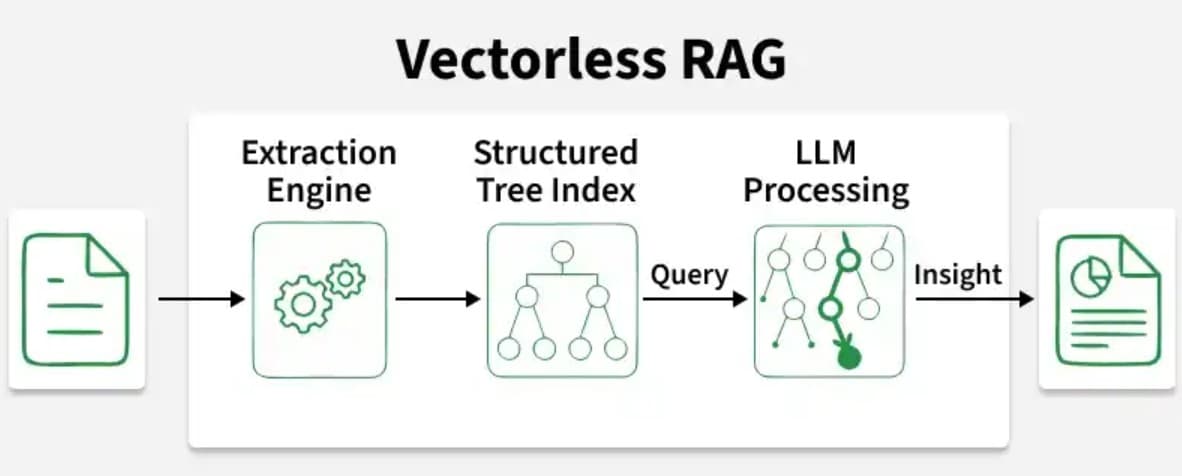

The Architecture of Certainty: Vectorless Retrieval

Vectorless RAG flips the script by returning to the fundamentals of information science: structured, symbolic, and keyword-driven retrieval. Instead of asking "what looks like this query?", a vectorless system asks "what contains the specific logic or labels required by this query?"
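A minimal sketch of the "what contains the labels" question, assuming simple lower-cased word tokens: a chunk is returned only if it contains every term in the query. The chunk IDs and text are hypothetical and reuse the EBITDA example.

```python
import re

# Hypothetical document chunks; IDs and text are invented for illustration.
CHUNKS = {
    "general-financial-health": "Overview of the company's general financial health and outlook.",
    "q3-2024-ebitda-table": "Table 4: Q3 2024 EBITDA adjustments by business unit.",
    "q2-2024-ebitda-table": "Table 3: Q2 2024 EBITDA adjustments by business unit.",
}


def tokenize(text: str) -> set[str]:
    """Lower-case the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def exact_match(query: str) -> list[str]:
    """Return only the chunks that contain every term in the query."""
    required = tokenize(query)
    return [chunk_id for chunk_id, text in CHUNKS.items() if required <= tokenize(text)]


print(exact_match("Q3 2024 EBITDA adjustments"))
```

Unlike similarity search, this filter never returns the Q2 table or the general overview for a Q3 query. The trade-off is that it also misses paraphrases such as "third-quarter EBITDA," which is one reason the hybrid approach discussed later exists.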

By using established technologies such as BM25 for keyword ranking, SQL for structured data, and knowledge graphs for relationship mapping, Vectorless RAG delivers a level of deterministic, repeatable accuracy that embedding similarity struggles to match. In the 2026 landscape, this is increasingly referred to as "Deterministic Grounding."
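BM25 adds ranking on top of exact matching: it rewards rare query terms and repeated matches while normalizing for document length. The class below is a from-scratch sketch of the standard Okapi BM25 formula with the common defaults k1 = 1.2 and b = 0.75 and a Lucene-style IDF. The documents are hypothetical, and in production you would normally rely on the BM25 ranking built into a search engine such as Elasticsearch rather than code like this.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lower-case the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


class BM25:
    """Minimal Okapi BM25 ranker with a Lucene-style IDF (illustrative only)."""

    def __init__(self, docs: dict[str, str], k1: float = 1.2, b: float = 0.75):
        self.k1, self.b = k1, b
        # Term frequencies per document, plus document lengths in tokens.
        self.tf = {doc_id: Counter(tokenize(text)) for doc_id, text in docs.items()}
        self.doc_len = {doc_id: sum(c.values()) for doc_id, c in self.tf.items()}
        self.avgdl = sum(self.doc_len.values()) / len(docs)
        # Document frequency: how many documents contain each term.
        self.df = Counter(term for c in self.tf.values() for term in c)
        self.n_docs = len(docs)

    def idf(self, term: str) -> float:
        """Rare terms (like 'q3') get a higher weight than common ones."""
        n = self.df[term]
        return math.log((self.n_docs - n + 0.5) / (n + 0.5) + 1)

    def score(self, query: str, doc_id: str) -> float:
        """Sum each query term's saturated, length-normalized contribution."""
        counts = self.tf[doc_id]
        length_norm = self.k1 * (1 - self.b + self.b * self.doc_len[doc_id] / self.avgdl)
        total = 0.0
        for term in tokenize(query):
            f = counts[term]
            if f:
                total += self.idf(term) * f * (self.k1 + 1) / (f + length_norm)
        return total

    def search(self, query: str, k: int = 3) -> list[tuple[str, float]]:
        """Return up to k documents with a positive score, best first."""
        scored = [(doc_id, self.score(query, doc_id)) for doc_id in self.tf]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return [(doc_id, s) for doc_id, s in scored[:k] if s > 0]


# Hypothetical corpus reusing the EBITDA example.
docs = {
    "general-financial-health": "Overview of the company's general financial health and outlook.",
    "q3-2024-ebitda-table": "Table 4: Q3 2024 EBITDA adjustments by business unit.",
    "q2-2024-ebitda-table": "Table 3: Q2 2024 EBITDA adjustments by business unit.",
}
ranker = BM25(docs)
results = ranker.search("Q3 2024 EBITDA adjustments")
for doc_id, s in results:
    print(f"{doc_id}: {s:.3f}")
```

Here the Q2 table still appears, but below the Q3 table, because the rare term "q3" carries extra weight; the general overview scores zero and is dropped.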

Why Global Enterprises Are Pivoting

The move toward vectorless systems is driven by three core business imperatives:

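- Speed and Latency: Every vector query must first be embedded by a model and then matched against a large vector index, which adds compute cost at scale. Symbolic retrieval from an inverted index or indexed table is typically very fast, allowing for real-time AI responses in high-throughput environments.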

- Cost Rationalization: Generating and storing millions of embeddings, and regenerating them whenever the content or the embedding model changes, is a significant recurring cost. For many businesses, a well-indexed search engine can deliver most of the value at a fraction of the price.

- Governance and Auditability: In regulated industries like finance and healthcare, relying on "semantic similarity" alone can become a legal liability. Decision-makers need to know exactly which paragraph triggered an AI's response, and vectorless systems provide a clear, deterministic audit trail.
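Both the speed and the auditability points come down to the inverted index that keyword search engines are built on. The sketch below is a simplified, hypothetical example: it maps each term to the (document, paragraph) pairs that contain it, so a lookup is a dictionary access rather than a scan, and every hit records exactly which paragraph matched and on which terms. The document names and policy text are invented.

```python
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Hit:
    """One retrieved paragraph plus the provenance needed for an audit log."""
    doc_id: str
    paragraph: int
    matched_terms: tuple[str, ...]
    text: str


def tokenize(text: str) -> list[str]:
    """Lower-case the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(docs: dict[str, list[str]]) -> dict[str, set[tuple[str, int]]]:
    """Inverted index: term -> set of (doc_id, paragraph_number)."""
    index: dict[str, set[tuple[str, int]]] = defaultdict(set)
    for doc_id, paragraphs in docs.items():
        for para_no, para in enumerate(paragraphs):
            for term in tokenize(para):
                index[term].add((doc_id, para_no))
    return index


def retrieve(index: dict[str, set[tuple[str, int]]],
             docs: dict[str, list[str]], query: str) -> list[Hit]:
    """Return paragraphs containing every query term, with provenance."""
    terms = sorted(set(tokenize(query)))
    if not terms:
        return []
    # Intersect the posting sets so only paragraphs with all terms survive.
    postings = [index.get(term, set()) for term in terms]
    matches = set.intersection(*postings)
    return [
        Hit(doc_id, para_no, tuple(terms), docs[doc_id][para_no])
        for doc_id, para_no in sorted(matches)
    ]


# Hypothetical policy documents, split into paragraphs.
docs = {
    "credit-policy-v3": [
        "Loans above $1M require board approval.",
        "Interest rates are reviewed quarterly.",
    ],
    "hr-handbook": ["Annual leave accrues monthly."],
}
index = build_index(docs)
for hit in retrieve(index, docs, "board approval"):
    # Audit-log line: which document, which paragraph, which terms matched.
    print(f"{hit.doc_id} #{hit.paragraph} matched {hit.matched_terms}: {hit.text}")
```

Logging each `Hit` alongside the model's answer gives reviewers the exact paragraph that grounded the response.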

“The future of RAG isn't about more complex embeddings; it's about better logic. We are seeing a massive return to structured data as the primary source of truth for LLMs.”

Implementation: The Case for Hybridity

Does this mean vector databases are obsolete? Far from it. Many of the most sophisticated AI deployments in the Australian market are now using Hybrid RAG. This architecture uses a "two-pass" system: it first uses vectorless retrieval to pull the specific, structured facts, then uses semantic vectors to fill in the nuanced, contextual gaps.
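The two-pass idea can be sketched as a small merging function. This is an assumption about how such a pipeline might be wired, not a reference design: `keyword_retriever` and `vector_retriever` are hypothetical stand-ins for any keyword or SQL retriever and any vector retriever that return ranked chunk IDs. Exact facts are placed first, and semantic results fill the remaining slots without duplicates.

```python
from typing import Callable

# A retriever takes a query string and returns ranked chunk IDs.
Retriever = Callable[[str], list[str]]


def hybrid_retrieve(
    query: str, symbolic: Retriever, semantic: Retriever, max_chunks: int = 5
) -> list[tuple[str, str]]:
    """Two-pass retrieval: exact facts first, semantic context second."""
    results: list[tuple[str, str]] = []
    seen: set[str] = set()
    for source, retriever in (("symbolic", symbolic), ("semantic", semantic)):
        for chunk_id in retriever(query):
            if len(results) >= max_chunks:
                return results
            if chunk_id not in seen:  # skip chunks the first pass already found
                seen.add(chunk_id)
                results.append((chunk_id, source))
    return results


# Hypothetical stand-in retrievers with hard-coded results.
def keyword_retriever(query: str) -> list[str]:
    return ["q3-2024-ebitda-table"]


def vector_retriever(query: str) -> list[str]:
    return ["general-financial-health", "q3-2024-ebitda-table", "annual-report-summary"]


merged = hybrid_retrieve("Q3 2024 EBITDA adjustments", keyword_retriever, vector_retriever)
print(merged)
```

Putting symbolic hits first ensures the exact facts survive any context-window budget. A common alternative is to interleave both ranked lists with a rank-fusion method such as reciprocal rank fusion.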

For example, a medical AI might use symbolic search to find a patient's exact dosage history (zero room for error) and then use vector search to summarize contemporary research papers that might be relevant to the patient's symptoms (nuanced understanding).
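For the dosage example, the symbolic pass can be an ordinary parameterized SQL query. The sketch below uses Python's built-in `sqlite3` module with an in-memory database; the table, column names, patient IDs, medication name, and doses are all hypothetical placeholders, not a real clinical schema.

```python
import sqlite3

# Hypothetical schema and invented records, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE dosage_history ("
    " patient_id TEXT, medication TEXT, dose_mg REAL, administered_on TEXT)"
)
conn.executemany(
    "INSERT INTO dosage_history VALUES (?, ?, ?, ?)",
    [
        ("P-001", "drug-a", 500, "2025-01-10"),
        ("P-001", "drug-a", 1000, "2025-02-14"),
        ("P-002", "drug-a", 850, "2025-01-22"),
    ],
)


def dosage_history(conn: sqlite3.Connection, patient_id: str,
                   medication: str) -> list[tuple[str, float]]:
    """Exact lookup: only rows for this patient and medication, oldest first."""
    return conn.execute(
        "SELECT administered_on, dose_mg FROM dosage_history"
        " WHERE patient_id = ? AND medication = ?"
        " ORDER BY administered_on",
        (patient_id, medication),
    ).fetchall()


history = dosage_history(conn, "P-001", "drug-a")
# Render the rows as plain text that can be placed verbatim in the LLM prompt.
context = "\n".join(f"{day}: {dose:g} mg" for day, dose in history)
print(context)
```

Because the query filters on exact patient and medication values, the same question always returns the same rows, and those rows can be quoted verbatim in the prompt.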

Final Thoughts: Choosing the Right Foundation

As we look toward 2027, the choice of retrieval architecture will become one of the most significant technical decisions for any AI team. For businesses prioritizing exactitude, cost-efficiency, and explainability, the vectorless path is no longer a niche alternative; it is the strategic choice for the next generation of intelligent systems.

Frequently Asked Questions

Does Vectorless RAG mean I don't need a database?

No. It means you don't need a *vector* database. You can use your existing PostgreSQL, Elasticsearch, or GraphDB systems, which are often already in your stack.

Is it harder to set up than traditional RAG?

Actually, it's often simpler. You don't have to manage embedding models or specialized vector infrastructure. If you have well-indexed documentation, you are already halfway there.

Can Vectorless RAG handle PDFs and messy text?

It's best suited for structured or well-tagged data. For raw, unstructured text blobs, vectors are still superior for understanding 'vibe' and 'topic,' which is why the Hybrid approach is recommended.

Leading the AI Evolution

At Agileitt, we specialize in building these advanced retrieval systems for Australian enterprises. If you're ready to move beyond basic chatbots and build a truly resilient, precision-first AI platform, let's discuss how a hybrid RAG architecture can transform your workflow.

For lean teams in particular, a vectorless approach reduces:

- Infrastructure cost

- Complexity

- Time to deploy